AI search is changing how businesses and brands become visible online. SEO is nowhere near “dead,” but it is adapting to AI search, which means “traditional SEO” alone is no longer enough. To optimize content for AI search, marketers and content creators need to understand how large language models (LLMs) like ChatGPT and Perplexity retrieve and recommend content. I’m going to explain the basics of AI Engine Optimization (AEO), including token passages, query fan-out, and declarative phrasing. I’ll also share actions you can take today to make your content more visible, accurate, and recommended in AI-generated answers.

Table of Contents

- What Is AI Engine Optimization (AEO)?

- Why AEO Matters in 2025

- What is Query Fan-Out?

- How to Write Effective Token Passages

- Use Declarative Sentences

- Actionable AEO Content Strategies

- Brand Visibility Optimization

- Final Summary

- FAQs

- References

What Is AI Engine Optimization (AEO)?

AI Engine Optimization (AEO) is the process of optimizing content for visibility, discovery, retrieval, and recommendation by AI search engines and large language models. AEO extends SEO beyond “traditional search engines” to AI search engines by considering how LLMs see and retrieve information.

Why AEO Matters in 2025

AEO matters because not everyone searches on traditional search engines like Google. If you’re a business with a website, you want it to be visible to, and found by, AI search engines. This is where AEO tactics come in.

- AI search engines like ChatGPT and Perplexity already draw a lot of high-quality, high-converting traffic.

- LLMs do not retrieve entire web pages the way traditional search engines do. Instead, they pick out relevant chunks of information from many sources and synthesize a response to the original query.

- Brands must ensure their content on the internet is structured, up-to-date, and easily retrievable by AI search engines.

What is Query Fan-Out?

The idea behind query fan-out is that a general query is expanded into more specific sub-queries related to the original query. The LLM tailors these sub-queries to the original query. An example is the best way to wrap your head around this idea, but first, a formal definition.

Definition:

Query fan-out is the process by which the AI expands a single broad query (e.g., “best Pilates studios”) into a range of more specific sub-queries, such as “best Pilates studios in Canada” or “best Pilates studio for knee rehabilitation.” This lets the AI deliver more tailored and relevant results to users.

Example:

A user asks, “best Pilates studios.” The sub-queries the LLM generates could be:

- Best Pilates studio in Toronto, Canada

- Best Pilates studio for online classes

- Best Pilates studio for knee replacement rehabilitation in Quebec

Optimizing for query fan-out enables brands to capture niche, high-intent traffic and break through the dominance of big brands in AI search results.
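In AI search the model itself writes the sub-queries, so there is no fixed template to copy. As a rough planning aid, though, the fan-out idea can be sketched in Python: the hypothetical `fan_out` function below combines a broad query with location and intent modifiers (taken from the Pilates example) to list niche sub-queries a page might target. It is an illustration under those assumptions, not how any particular LLM actually expands queries.

```python
from itertools import product


def fan_out(query: str, locations: list[str], intents: list[str]) -> list[str]:
    """Expand a broad query into more specific sub-queries.

    Template-based sketch only: a real LLM generates sub-queries itself,
    and the modifier lists here are hypothetical planning inputs.
    """
    sub_queries = [f"{query} in {location}" for location in locations]
    sub_queries += [f"{query} for {intent}" for intent in intents]
    # Combine intent + location for the most specific, highest-intent variants.
    sub_queries += [
        f"{query} for {intent} in {location}"
        for intent, location in product(intents, locations)
    ]
    return sub_queries


if __name__ == "__main__":
    for sub_query in fan_out(
        "best Pilates studio",
        locations=["Toronto, Canada", "Quebec"],
        intents=["online classes", "knee replacement rehabilitation"],
    ):
        print(sub_query)
```

Each generated line is a candidate topic for a dedicated page or token passage, with the intent-plus-location combinations being the narrowest.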

How to Write Effective Token Passages

A token passage is a self-contained chunk of text optimized for retrieval and comprehension by LLMs. So what exactly does that mean?

A token passage is a small chunk of text, usually 100–300 tokens (about 70–220 words), that expresses a single complete idea or argument. LLMs process and retrieve these passages rather than entire web pages. A single token isn’t necessarily a single word: it could be part of a word, a couple of words, or punctuation.

Points to consider when creating effective token passages:

- Express a single, complete idea or argument per passage.

- Use clear, direct, and declarative language.

- Ensure each passage answers a specific question or intent.

- Keep each passage between 100–300 tokens.

- Structure content with schema markup and FAQs for better LLM retrieval.
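The length guideline is easier to apply with a quick estimate. The sketch below assumes a rule of thumb of roughly 0.75 words per token (close to the 100–300 tokens ≈ 70–220 words figure above) instead of a real tokenizer, and greedily groups whole sentences into passages that stay under the 300-token ceiling. The helper names and the sentence-splitting regex are illustrative assumptions; a production workflow would count tokens with the tokenizer of the model it targets.

```python
import re

# Rough rule of thumb (assumption): about 0.75 English words per token.
WORDS_PER_TOKEN = 0.75


def estimate_tokens(text: str) -> int:
    """Approximate a token count from the word count."""
    return round(len(text.split()) / WORDS_PER_TOKEN)


def split_sentences(text: str) -> list[str]:
    """Naive sentence splitter: break after ., ! or ? followed by whitespace."""
    return [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]


def chunk_into_passages(text: str, max_tokens: int = 300) -> list[str]:
    """Greedily pack whole sentences into passages of at most ~max_tokens.

    A single sentence longer than max_tokens still becomes its own passage.
    """
    passages: list[str] = []
    current: list[str] = []
    for sentence in split_sentences(text):
        candidate = " ".join(current + [sentence])
        if current and estimate_tokens(candidate) > max_tokens:
            passages.append(" ".join(current))
            current = [sentence]
        else:
            current.append(sentence)
    if current:
        passages.append(" ".join(current))
    return passages


def short_passages(passages: list[str], min_tokens: int = 100) -> list[int]:
    """Return indexes of passages that fall below the ~100-token floor."""
    return [i for i, p in enumerate(passages) if estimate_tokens(p) < min_tokens]
```

Splitting on sentence boundaries keeps every sentence intact; whether each passage truly expresses a single idea still needs a human read.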

Why Token Passages Matter

Token passages matter because they increase the visibility of your content to LLMs. Why? LLMs chunk your content into token passages, give each passage a relevance score, and then pull a set of passages from a variety of sources to synthesize a final answer for the searcher.
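The chunk, score, and pull loop can be illustrated with a toy retriever. The sketch below scores each passage by the share of query words it contains and returns the top-k passages across sources. Real AI search systems use far richer relevance signals (such as embedding similarity), so treat the scoring function, the source names, and the sample passages as hypothetical; the point is only that a passage stating the answer in the searcher’s own terms outranks one that does not.

```python
import re


def words(text: str) -> set[str]:
    """Lowercased word set; punctuation is ignored."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def relevance(query: str, passage: str) -> float:
    """Toy relevance score: fraction of query words that appear in the passage."""
    query_words = words(query)
    if not query_words:
        return 0.0
    return len(query_words & words(passage)) / len(query_words)


def retrieve(query: str, passages: list[tuple[str, str]], k: int = 2) -> list[tuple[str, str, float]]:
    """Score (source, passage) pairs and return the top-k, best first."""
    scored = [(source, text, relevance(query, text)) for source, text in passages]
    # sorted() is stable even with reverse=True, so ties keep their input order.
    return sorted(scored, key=lambda item: item[2], reverse=True)[:k]


if __name__ == "__main__":
    candidates = [
        ("studio-a.example", "Our Pilates reformer classes support knee rehabilitation after surgery."),
        ("blog-b.example", "Yoga and Pilates both improve flexibility."),
        ("shop-c.example", "Buy running shoes for marathon training."),
    ]
    for source, text, score in retrieve("Pilates for knee rehabilitation", candidates):
        print(f"{score:.2f}  {source}: {text}")
```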

What are declarative sentences?

Declarative sentences use clear, direct statements to express facts, opinions, or explanations. They state something as true or factual (even if it’s an opinion), avoid vague qualifiers and passive uncertainty, and use a clear subject-verb-object structure when writing.

What to consider when writing a declarative sentence

- State something as true or factual.

- Use subject-verb-object structure.

- Avoid vague qualifiers and passive uncertainty.

Examples of vague vs. declarative sentences:

| Vague Sentence | Declarative Sentence |
| --- | --- |
| Posture Perfect Pilates Studio may have some perfect classes for you, but it depends on you. | Posture Perfect Pilates Studio offers 6am Power Pilates classes for the early riser. |
| It’s possible that Pilates offers something that can help with your back pain. | Pilates reformer work with your feet in the straps provides support that minimizes back strain while you strengthen your core. |
| There is some Pilates equipment that can be helpful for rehabilitation. | The Pilates reformer is ideal for strengthening muscles during rehabilitation of knee injuries. |
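The contrast in the table can be turned into a rough self-editing check. The sketch below flags hedge words that tend to make a sentence vague. The word list is an assumption drawn from the vague examples above, not a standard, and a keyword match is only a prompt to reread the sentence (for instance, “may” also matches the month May).

```python
import re

# Hypothetical hedge-word list, drawn from the vague examples in the table.
VAGUE_QUALIFIERS = (
    "may", "might", "could", "perhaps", "possibly", "possible",
    "some", "depends", "can be",
)


def find_vague_qualifiers(sentence: str) -> list[str]:
    """Return the hedge terms found in a sentence, in list order."""
    return [
        term
        for term in VAGUE_QUALIFIERS
        # \b word boundaries stop "some" from matching "something".
        if re.search(rf"\b{re.escape(term)}\b", sentence, flags=re.IGNORECASE)
    ]


if __name__ == "__main__":
    drafts = [
        "Posture Perfect Pilates Studio may have some perfect classes for you, but it depends on you.",
        "Posture Perfect Pilates Studio offers 6am Power Pilates classes for the early riser.",
    ]
    for draft in drafts:
        hits = find_vague_qualifiers(draft)
        print("REWRITE" if hits else "OK", hits, "-", draft)
```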

Why Declarative Sentences Matter

LLMs favor declarative sentences because they make information retrieval easier and more accurate. Declarative sentences are also more likely to be quoted or cited in AI-generated answers.

Actionable AEO Content Strategies

- Refresh content older than 12 months.

- Add or update schema markup, especially FAQs.

- Use dates in titles (e.g., “Best X in July 2025”).

- Create niche comparison pages to address specific fan-out sub-queries.

- Monitor which pages receive LLM traffic and optimize for conversion and buyer information.

- Frontload evidence and expert citations. That is, put your most important and valuable information, data, and references at the beginning of your content to build trust and authority.

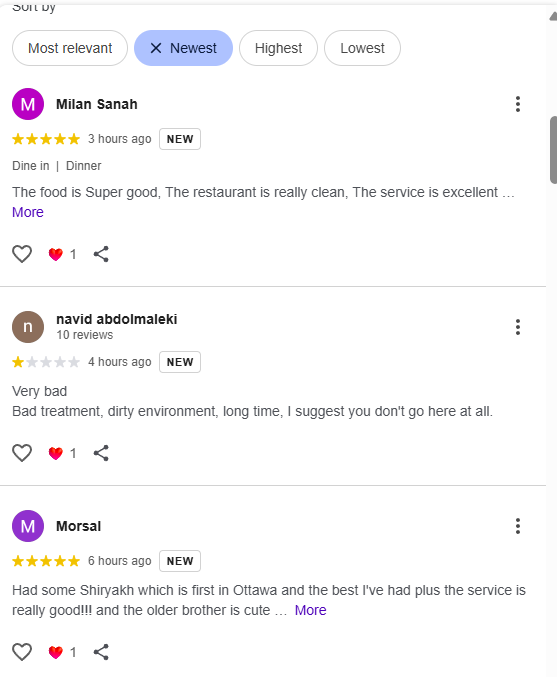
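Two of these strategies are easy to automate. The sketch below builds schema.org `FAQPage` markup as JSON-LD (a structured-data format search engines read from a `<script type="application/ld+json">` tag) and flags content whose last update is more than 12 months old. The function names and the sample question are illustrative assumptions; validate real markup with a structured-data testing tool before publishing.

```python
import json
from datetime import date


def faq_jsonld(faqs: list[tuple[str, str]]) -> str:
    """Build schema.org FAQPage markup (JSON-LD) from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in faqs
        ],
    }
    return json.dumps(data, indent=2, ensure_ascii=False)


def script_tag(jsonld: str) -> str:
    """Wrap JSON-LD in the script tag that goes in the page's HTML."""
    return f'<script type="application/ld+json">\n{jsonld}\n</script>'


def needs_refresh(last_updated: date, today: date, max_age_days: int = 365) -> bool:
    """True when content is older than roughly 12 months."""
    return (today - last_updated).days > max_age_days


if __name__ == "__main__":
    markup = faq_jsonld([
        (
            "What is a token passage?",
            "A token passage is a chunk of text, typically 100–300 tokens, "
            "that expresses a single idea and is optimized for retrieval by LLMs.",
        ),
    ])
    print(script_tag(markup))
    print(needs_refresh(date(2024, 6, 1), today=date(2025, 7, 1)))
```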

Brand Visibility Optimization

SEO is adapting to include optimizing for visibility across the internet, and that includes optimizing your brand’s visibility. You want every AI search engine and LLM to recognize your brand wherever it encounters it online. What does this involve?

- Verifying which LLMs know about your product or service. This can be hard because there is a practically unlimited number of questions someone could ask an AI search engine that might surface your product or service. The next two points are easier to implement.

- Fixing misrepresented information across the web (e.g., Reddit, YouTube, social media, your website, and your Google Business Profile). You want a consistent brand across the web, including your logo, colours, messaging, voice, and anything else that represents your brand at any point in time.

- Creating LLM-friendly content with clear, declarative statements, and applying the tactics in this post so that LLMs can discover and retrieve your content easily.
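Fixing misrepresented information starts with knowing where the facts disagree. The sketch below compares brand facts collected from different places (by hand, or copied from AI-generated answers) against a single canonical fact sheet and lists every mismatch. The brand details, source names, and fact keys are hypothetical placeholders based on the fictional Pilates studio above, and the comparison is a plain case-insensitive string match, so values worded differently but meaning the same thing still need a human check.

```python
# Hypothetical canonical fact sheet: the single source of truth for the brand.
CANONICAL = {
    "name": "Posture Perfect Pilates Studio",
    "earliest_class": "6am",
    "specialty": "Power Pilates",
}

# Hypothetical facts as they currently appear elsewhere (collected manually).
OBSERVED = {
    "Website": {"name": "Posture Perfect Pilates Studio", "earliest_class": "6am"},
    "Google Business Profile": {"earliest_class": "7am"},
    "AI-generated answer": {"name": "Posture Perfect Pilates", "specialty": "Power Pilates"},
}


def find_misrepresentations(
    canonical: dict[str, str], observed: dict[str, dict[str, str]]
) -> list[tuple[str, str, str, str]]:
    """Return (source, fact, found value, expected value) for every mismatch."""
    issues = []
    for source, facts in observed.items():
        for key, value in facts.items():
            expected = canonical.get(key)
            # Only compare facts that exist on the canonical sheet.
            if expected is not None and value.strip().casefold() != expected.strip().casefold():
                issues.append((source, key, value, expected))
    return issues


if __name__ == "__main__":
    for source, key, found, expected in find_misrepresentations(CANONICAL, OBSERVED):
        print(f"{source}: {key} is '{found}', should be '{expected}'")
```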

Summary: How to Optimize Content for AI Search

AI Engine Optimization is a requirement for brands that want to stay visible and competitive as search evolves. By understanding and applying concepts like token passages, query fan-out, and declarative phrasing, content creators can ensure their information is visible, easily discoverable, and accurately represented by LLMs. Optimizing your brand’s visibility and following the steps above to become visible to AI search engines will increase the chances that your brand is mentioned and recommended in AI-generated answers.

FAQs

What is a token passage?

A token passage is a chunk of text, typically 100–300 tokens, that expresses a single idea and is optimized for retrieval by large language models.

How can small brands win in AI search?

Small brands can win by targeting niche fan-out sub-queries, keeping content fresh, and using structured, declarative passages that clearly answer specific questions.

Why do declarative sentences matter for AEO?

Declarative sentences make it easier for LLMs to extract, understand, and recommend your content in AI-generated answers.

What is the Truth Alignment Framework?

The Truth Alignment Framework is a system to audit and improve how accurately LLMs represent your brand by verifying known features and correcting misrepresented information.

How do I optimize content for AI search?

Optimize by creating 100–300 token passages, using declarative phrasing, updating schema markup, and focusing on specific user intents and comparison queries.

References

- Webinar Recap: Is Your SEO Strategy Ready for AI Search? (AirOps, July 2025)

- What is the difference between SEO, AEO and GEO?

- How to Optimize Content for AI: A Guide to LLMO, GEO, and the “New” SEO

- What are some SEO tactics to try in 2025?

- How to adapt SEO to the changes AI is creating

- How Search is Changing in 2025